The surface of our pale blue dot is changing. The effects of climate change and the disasters that follow from it are covered at length in popular media and academic journals. However, with growing climate awareness, individuals and organizations are beginning to take on our collective responsibility to leave the planet in better shape for future generations. This change is also visible from above.

Treaties like the Paris Climate Accord show the willingness of nations to contribute to the cause. Whether it's governments moving away from fossil fuels and committing to renewable energy or individuals wanting to play their part, the results are remarkable and inspiring to witness.

This post looks at this change in action by analyzing how land cover evolves over time in high-resolution satellite imagery.

Land Cover Classification

Land cover classification addresses the physical representation of materials on the Earth's surface seen from above. Over the years, many government agencies have produced land cover maps over their respective countries. There have also been efforts to produce global land cover maps with varying resolutions.

For example, the National Land Cover Database (NLCD), produced by the USGS through the Multi-Resolution Land Characteristics (MRLC) Consortium, is a widely known land cover map of the USA. These products are produced using decadal Landsat imagery fused with other supplementary datasets. Another example is ESA's prototype land cover map of the African continent at 20 m resolution, with ten generic classes.

Such land cover maps are very useful for observing large-scale changes over time. However, with the increasing resolution of commercial satellite imagery and advances in deep learning, efforts have been made to produce high-resolution land cover maps. For example, under its AI for Earth initiative, Microsoft recently released a high-resolution land cover map of the USA based on NAIP imagery.

Land Cover Classification at UP42

At UP42, we recently developed an algorithm, published as a processing block on our marketplace, that detects land cover classes in high-resolution SPOT and Pleiades images. The block uses a deep learning technique known as semantic segmentation, in which each pixel in an image is assigned a class. We trained the classifier on SPOT and Pleiades imagery over parts of the USA, Africa, and selected regions of Asia and Europe. The block currently supports five classes:

| Class ID | Class Name | Map Color |
|---|---|---|
| 1 | Water | Blue |
| 2 | High Vegetation (including trees) | Dark Green |
| 3 | Low Vegetation (including bushes and grass) | Light Green |
| 4 | Barren Land | Dark Yellow |
| 5 | Urban | Red |
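A semantic segmentation model typically outputs a score for every class at every pixel, and the predicted class is the highest-scoring one. The sketch below illustrates that last step and the color coding in the table above. It assumes the scores arrive as a NumPy array of shape (5, H, W) whose channel 0 corresponds to class ID 1, and the RGB values in `CLASS_COLORS` are hypothetical stand-ins for the color names in the table. It is not the block's actual implementation.

```python
import numpy as np

# Hypothetical RGB values for the color names in the table;
# the block's actual palette may differ.
CLASS_COLORS = {
    1: (0, 0, 255),      # Water: blue
    2: (0, 100, 0),      # High Vegetation: dark green
    3: (144, 238, 144),  # Low Vegetation: light green
    4: (204, 153, 0),    # Barren Land: dark yellow
    5: (255, 0, 0),      # Urban: red
}


def scores_to_class_ids(scores: np.ndarray) -> np.ndarray:
    """Convert per-class scores of shape (num_classes, H, W) into class IDs.

    Assumes channel 0 holds class ID 1, channel 1 holds class ID 2, and so on.
    """
    return np.argmax(scores, axis=0) + 1


def colorize(class_ids: np.ndarray) -> np.ndarray:
    """Map an (H, W) array of class IDs to an (H, W, 3) uint8 RGB image."""
    # Lookup table indexed by class ID; index 0 (no class) stays black.
    lut = np.zeros((max(CLASS_COLORS) + 1, 3), dtype=np.uint8)
    for class_id, rgb in CLASS_COLORS.items():
        lut[class_id] = rgb
    return lut[class_ids]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    scores = rng.random((5, 4, 4))  # toy scores for a 4x4 tile
    ids = scores_to_class_ids(scores)
    rgb = colorize(ids)
    print(ids)
    print(rgb.shape)  # (4, 4, 3)
```

Using a lookup table keeps the coloring a single vectorized indexing operation, which matters for large Pleiades scenes.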

We used two metrics to evaluate the model: accuracy and the Jaccard score (IoU). Accuracy is the fraction of pixels whose predicted class matches the label in the test data set. The Jaccard score, also called intersection over union (IoU), is a similarity measure: for each class, it is the number of pixels that both the prediction and the ground truth assign to that class, divided by the number of pixels that either of them assigns to it. For more information on similarity measures, see the article Comparing Ground Truth With Predictions Using Image Similarity Measures by our colleague Niku Ekhtiari, Ph.D.
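Written out, accuracy is (correctly classified pixels) / (all pixels), and for a class c, IoU_c = |P_c ∩ G_c| / |P_c ∪ G_c|, where P_c and G_c are the sets of pixels that the prediction and the ground truth assign to c. The NumPy sketch below computes both from two integer label arrays of equal shape. The post does not say how per-class IoU values were combined into the single reported score, so the unweighted mean over classes present in either array (`mean_iou`) is an assumption.

```python
import numpy as np


def pixel_accuracy(pred: np.ndarray, truth: np.ndarray) -> float:
    """Fraction of pixels whose predicted class equals the ground-truth class."""
    return float(np.mean(pred == truth))


def iou_per_class(pred: np.ndarray, truth: np.ndarray, class_ids) -> dict:
    """Jaccard score |P ∩ G| / |P ∪ G| for each class ID present in pred or truth."""
    scores = {}
    for c in class_ids:
        p = pred == c
        g = truth == c
        union = np.logical_or(p, g).sum()
        if union == 0:
            continue  # class absent from both arrays: IoU undefined, skip it
        scores[c] = float(np.logical_and(p, g).sum() / union)
    return scores


def mean_iou(pred, truth, class_ids=(1, 2, 3, 4, 5)) -> float:
    """Unweighted mean of per-class IoU values (an assumed aggregation)."""
    per_class = iou_per_class(pred, truth, class_ids)
    if not per_class:
        return float("nan")
    return float(np.mean(list(per_class.values())))


if __name__ == "__main__":
    truth = np.array([[1, 1, 2], [2, 3, 3]])
    pred = np.array([[1, 2, 2], [2, 3, 1]])
    print(pixel_accuracy(pred, truth))  # 4 of 6 pixels match
    print(iou_per_class(pred, truth, (1, 2, 3)))
    print(mean_iou(pred, truth))
```

Note that IoU is stricter than accuracy: a pixel misclassified from class A to class B lowers the IoU of both classes, which is one reason the IoU figure is typically lower than the accuracy figure.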

We tested our model on independent test data sets scattered around the globe, achieving an accuracy of 0.64 and an IoU of 0.51. As with any machine learning task, the model will evolve and improve as more labeled data becomes available. Given the spectral diversity across regions and climate zones, the model may misclassify certain classes in some areas.

Restoring Pakistan’s Lost Forests

Pakistan is in the first phase of an ambitious plan to plant 10 billion trees. A recent Bloomberg article highlighted several areas that illustrate the reforestation project's progress. What sets this project apart is that trees are planted in recreational areas and on any available bare land.

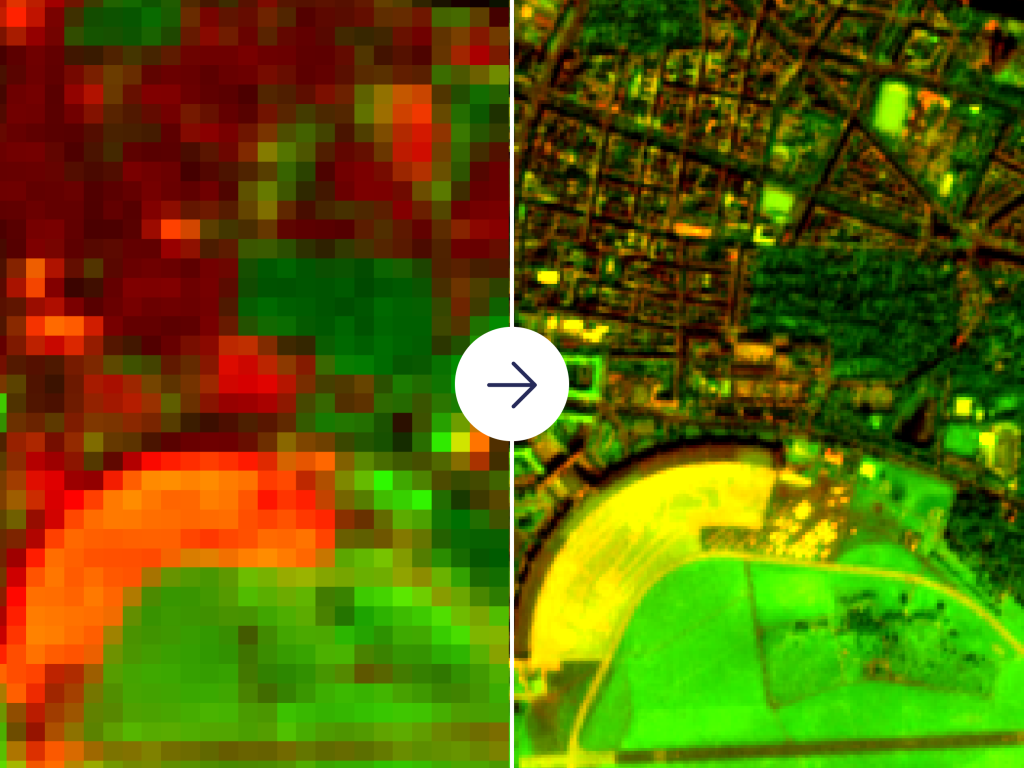

One of the focus areas is a bypass intersection in the northern part of Karachi, Pakistan. We took Pleiades images from 2017 to 2020 and ran the land cover classification algorithm on them. To minimize the effect of seasonal differences in vegetation, we only used images acquired in February and March. Here you can see the visual results of the algorithm.
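Restricting a multi-year comparison to the same season amounts to a filter on acquisition dates. The sketch below assumes scene metadata is available as (scene ID, acquisition date) pairs and keeps, for each year, the earliest February or March acquisition. The function name, the one-scene-per-year rule, and the scene IDs and dates in the demo are hypothetical; they are not the Pleiades scenes used in this analysis.

```python
from datetime import date

# Months kept to reduce seasonal (phenological) differences between years.
ALLOWED_MONTHS = {2, 3}


def pick_one_scene_per_year(scenes):
    """Return {year: scene_id} using the earliest Feb/Mar acquisition of each year.

    `scenes` is an iterable of (scene_id, acquisition_date) pairs.
    """
    chosen = {}
    for scene_id, acquired in sorted(scenes, key=lambda s: s[1]):
        if acquired.month not in ALLOWED_MONTHS:
            continue
        chosen.setdefault(acquired.year, scene_id)  # keep the earliest match only
    return chosen


if __name__ == "__main__":
    # Hypothetical scene IDs and dates for illustration only.
    scenes = [
        ("scene-a", date(2017, 2, 14)),
        ("scene-b", date(2017, 7, 3)),
        ("scene-c", date(2018, 3, 9)),
        ("scene-d", date(2019, 2, 27)),
        ("scene-e", date(2019, 3, 20)),
        ("scene-f", date(2020, 11, 5)),
    ]
    print(pick_one_scene_per_year(scenes))
    # {2017: 'scene-a', 2018: 'scene-c', 2019: 'scene-d'}
```

In this toy list, 2020 has no February or March scene, so it is dropped rather than compared against imagery from a different season.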

Additionally, we computed the coverage of each class from the classified images to create a time series that further illustrates the increase in vegetation from 2017 to 2020.
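One way to turn classified images into such a time series is to count, for each year, the share of pixels assigned to each class. The sketch below assumes each classified image is a NumPy array of class IDs 1 to 5 and that 0 marks no-data pixels; that no-data convention and the synthetic 2x3 maps in the demo are assumptions for illustration, not results from Karachi.

```python
import numpy as np

CLASS_NAMES = {1: "Water", 2: "High Vegetation", 3: "Low Vegetation",
               4: "Barren Land", 5: "Urban"}


def class_coverage(class_ids: np.ndarray, nodata: int = 0) -> dict:
    """Percentage of valid pixels assigned to each class in one classified image.

    Pixels equal to `nodata` (an assumed convention) are excluded from the total.
    """
    valid = class_ids[class_ids != nodata]
    total = valid.size
    if total == 0:
        return {name: 0.0 for name in CLASS_NAMES.values()}
    return {name: 100.0 * np.count_nonzero(valid == cid) / total
            for cid, name in CLASS_NAMES.items()}


def coverage_time_series(classified_by_year: dict) -> dict:
    """Map {year: classified array} to {year: {class name: percent}}, sorted by year."""
    return {year: class_coverage(arr)
            for year, arr in sorted(classified_by_year.items())}


if __name__ == "__main__":
    # Synthetic class maps, for illustration only.
    maps = {
        2018: np.array([[2, 4, 4], [4, 5, 0]]),
        2017: np.array([[4, 4, 4], [4, 5, 0]]),
    }
    for year, cov in coverage_time_series(maps).items():
        print(year, round(cov["High Vegetation"], 1))
```

Reporting percentages of valid pixels rather than raw pixel counts keeps years comparable even if the scenes cover slightly different footprints or contain clouds masked as no-data.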

Please note that Low Vegetation coverage can differ significantly between years because of weather conditions. The following chart therefore shows the coverage of the High Vegetation class, which supports the hypothesis that vegetation has increased.
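To put a single number on a trend like the one in the chart, one option is the slope of a least-squares line through the yearly High Vegetation coverage values. This is our illustrative assumption about how the trend could be quantified, not the method behind the chart, and the coverage values in the demo are toy numbers rather than the measured results.

```python
import numpy as np


def linear_trend(years, coverage_percent) -> float:
    """Least-squares slope of coverage, in percentage points per year.

    A positive slope is consistent with increasing coverage; with only a few
    yearly observations it is a rough indicator, not a significance test.
    """
    slope, _intercept = np.polyfit(np.asarray(years, dtype=float),
                                   np.asarray(coverage_percent, dtype=float), 1)
    return float(slope)


if __name__ == "__main__":
    # Toy values for illustration; these are NOT the measured Karachi results.
    years = [2017, 2018, 2019, 2020]
    high_veg = [10.0, 12.0, 14.0, 16.0]
    print(linear_trend(years, high_veg))  # about 2.0 points per year
```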

In some ways, this analysis isn't unique. Freely and easily accessible satellite data sets, such as Sentinel and Landsat, have enabled many geospatial analysts to perform land cover classification at scale. Indeed, these lower-resolution data sets are hugely valuable for global monitoring.

However, as urban spaces become an increasingly important area for regeneration, tracking progress with high-resolution data sets and compatible algorithms is vital.

Do you have an urban regeneration project that you would like to monitor? Sign up for UP42 today to purchase, access, and analyze SPOT and Pleiades data.