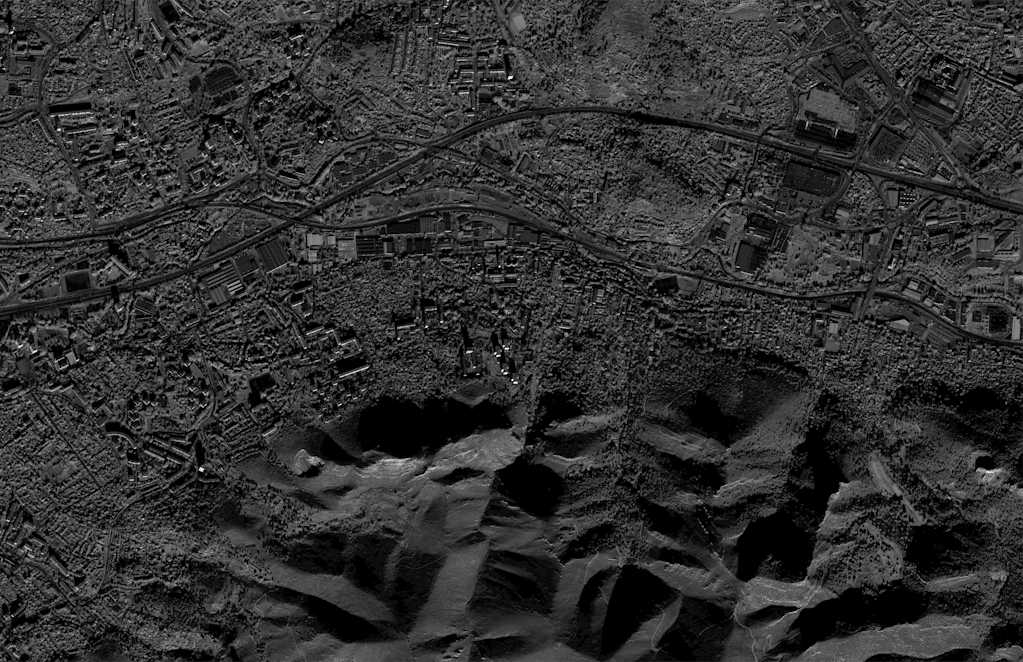

Multispectral satellite

Capture imagery across the visible and near-visible spectrum, including multispectral and hyperspectral. Imagery is available at up to 30 cm resolution, or 15 cm pansharpened, with data from Airbus, Vantor (formerly Maxar), ISI, Planet, Satellogic, SIIS, 21AT, and more.